Spring, 2026

The Achilles’ Heel in the Matrix

On the particular humiliation of watching a trillion-parameter model lose its composure over a 14×14 grid of letters.

There are few Sunday rituals quite as grounding as perfecting a V60 pour-over in a quiet New York apartment and cracking open the first-of-the-month Jane Street puzzle[1]. In the breathless age of generative AI — where neural networks are passing the bar exam and writing functional code before we have finished our morning coffee — there is something wonderfully humbling about watching a trillion-parameter model completely lose its composure over a fourteen-by-fourteen grid of letters.

Jane Street, the quantitative trading firm notorious for its beautifully punishing logic puzzles, seems to relish its role as the ultimate AI foil. Their recent April challenge — a beguiling little matrix of characters and hyphens titled “Can U Dig It?” — is, to a human, a spatial riddle. To a Large Language Model, it is an existential crisis[2].

If you have spent any time asking language models to solve grid-based puzzles, you have likely noticed a spectacular capability gap. We assume that because an AI can parse quantum mechanics, it can certainly manage a word search. But two-dimensional grids, matrices, and spatial reasoning are currently the digital Achilles’ heel of Large Language Models. Here is exactly why the smartest machines in the world still draw a blank when faced with a simple grid — and how one must hold their digital hand, with considerable patience and not a little condescension, to get them across the finish line.

The Tokenisation Blind Spot

When we look at a 14×14 matrix, our peripheral vision instantly relates columns and rows. We immediately understand that Row 2 sits physically beneath Row 1. An LLM does not possess a “mind’s eye.” Thanks to tokenisation, it does not see a square; it sees a long, relentless 196-character noodle of text, in which the letter directly “below” any given cell is simply the one fourteen positions further along. If a puzzle requires you to navigate down a column or, heaven forbid, read the grid in a boustrophedon pattern[3], the AI must calculate an index offset for every single character. One missed hyphen shifts every later offset by one, and the entire spatial reality collapses like a soufflé at a dinner party where someone has slammed a door.
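To make the offset arithmetic concrete, here is a minimal sketch in Python. It assumes the grid has been serialised row by row into a single string with no separators. The names `flat_index` and `read_column` and the toy 4-wide grid are mine, chosen purely for illustration; this is not the puzzle's actual grid.

```python
WIDTH = 4  # hypothetical toy width; the puzzle's grid is 14 wide


def flat_index(row: int, col: int, width: int = WIDTH) -> int:
    """Position of cell (row, col) in a row-by-row flattened string."""
    return row * width + col


def read_column(flat: str, col: int, width: int = WIDTH) -> str:
    """Read one column by stepping `width` characters at a time."""
    return flat[col::width]


# A toy 3x4 grid, flattened row by row: ABCD / EFGH / IJKL
toy = "ABCD" "EFGH" "IJKL"
print(read_column(toy, 1))   # BFJ

# Drop a single character (the "C") and every later offset slips by one.
broken = toy[:2] + toy[3:]
print(read_column(broken, 1))  # BGK
```

The second call is the soufflé collapsing: nothing in the string itself announces that a character went missing, so the column simply reads wrong from that point on.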

Researchers at Yale confirmed the damage in “Evaluating Spatial Understanding of Large Language Models” — a paper that pitted GPT-4 against humans on grid navigation tasks and discovered that aggregated human accuracy reached 67 percent while GPT-4 managed a rather humbling 29. The model’s errors, tellingly, clustered near topologically close locations — suggesting it almost grasps spatial structure, in the way that a dinner guest who keeps setting the fish fork on the wrong side almost grasps etiquette[4].

The gap extends beyond grids. Ramp Labs has been running a delightful ongoing experiment in which language models from OpenAI, Anthropic, xAI, Google, and Meta play Connect Four against one another — a game that requires precisely the kind of spatial board-state reasoning that LLMs find so vexing. The results are publicly viewable at llmgames.ramp.com, and they are, to anyone who has spent time watching these models confidently miscount columns, both instructive and gently hilarious. Trillion-parameter systems that can draft legislation and explain Wittgenstein cannot reliably determine whether dropping a disc in column four will complete a diagonal. One watches the replays with the same affection one watches a golden retriever attempt to catch a frisbee in a crosswind[5].

In a separate NeurIPS 2024 paper out of Microsoft Research, “Mind’s Eye of LLMs,” researchers diagnosed the deeper cognitive gap. Humans use a “visuospatial sketchpad” to mentally track movements and shapes. Language models reason strictly verbally. If you ask an AI to mentally navigate a maze, it routinely loses its place because it cannot close its eyes and picture the board. It is, in effect, trying to describe a painting by reading the chemical composition of the pigments: technically complete, spiritually useless[6].

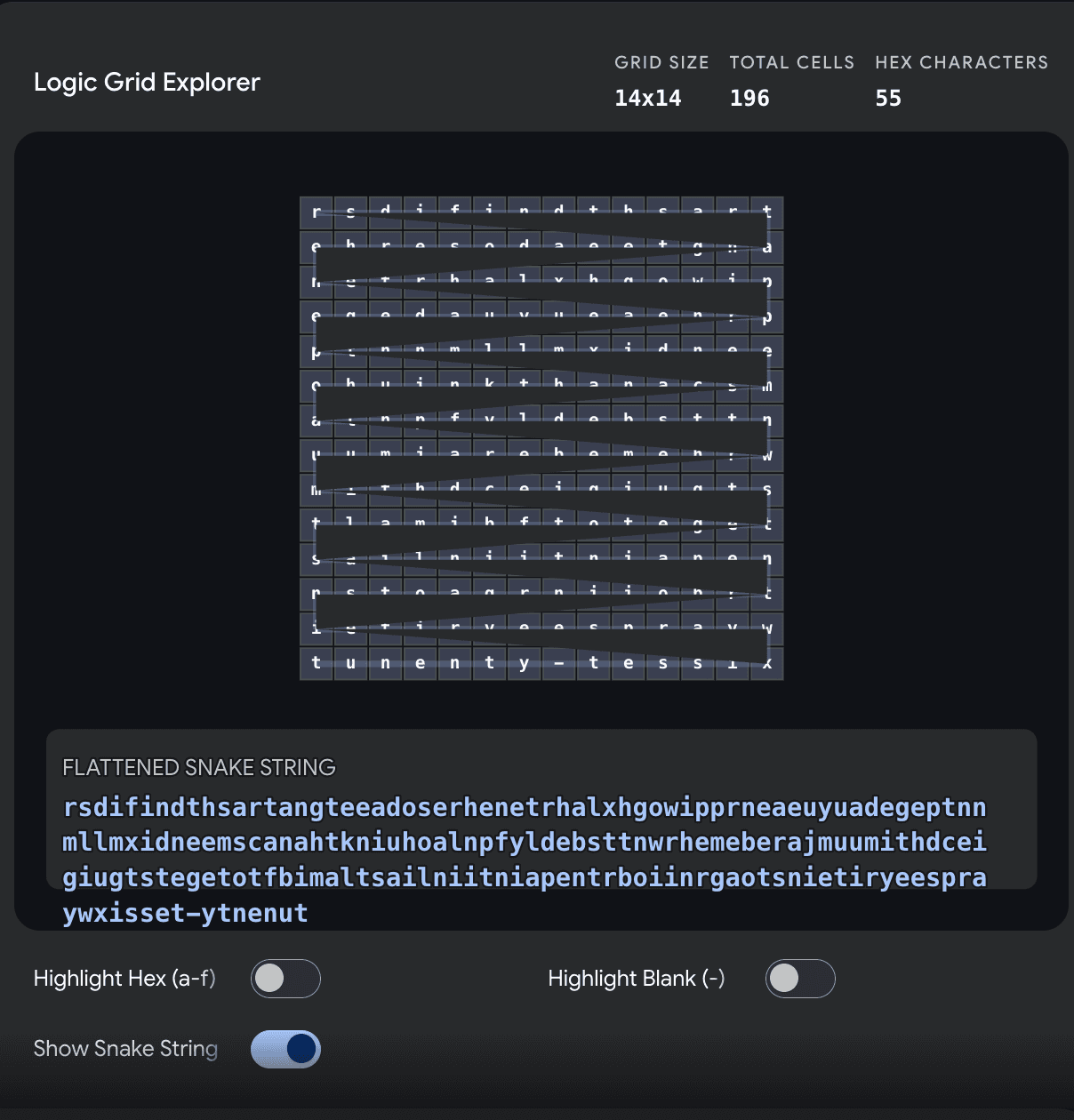

The Logic Grid Explorer: flattening a 14×14 matrix into a one-dimensional snake string, because two dimensions were, evidently, too many for a trillion parameters.

The Vision Mirage: “Eyes” vs. “Brain”

You might think the obvious workaround is to skip the text prompt entirely and simply upload a screenshot of the grid. Enter the Vision-Language Model — the AI equivalent of giving a philosopher a pair of opera glasses and expecting him to perform surgery[7].

Uploading an image successfully bypasses the tokenisation trap. The model’s vision encoder chops the image into patches, allowing it to finally “see” the spatial layout. But this introduces a fabulous new set of bottlenecks. First, there are high-density hallucinations. A 14×14 grid packed with characters is an invitation to catastrophe. Vision models hallucinate a zero as the letter O with the confidence of a sommelier who has confused Burgundy with Beaujolais — an error that, in certain circles, is grounds for excommunication.

But the truer problem is the disconnect between the “eyes” and the “brain.” Even if the vision encoder perfectly maps all 196 squares, the language backbone still must execute the logic: start at coordinate X, snake backward, count six spaces, repeat. Imagine staring at a grid of letters on a wall across the room and being told to read every sixth letter backward — but you are not permitted to point your finger to keep your place. You would suffer from attention drift. The algorithmic reasoning required to traverse the grid vastly outpaces the model’s ability to maintain focus on the exact visual coordinates step after step[8].

Building the Mental Canvas

So, how does one solve a Jane Street puzzle in the age of AI? One does not ask the AI to be a human. One forces it to be a computer — stripping the problem of everything poetic and reducing it to something that would make a Romantic poet weep and a software engineer nod with grim satisfaction[9].

The Snake Parser (Image to Data). We write a script to destroy the 2D matrix, and I use the word “destroy” with the enthusiasm of someone who has been personally wronged by two-dimensional data structures. We flatten the grid by iterating through the rows, counting from zero: even-numbered rows are read left-to-right, and odd-numbered rows are reversed. The result: a mathematically pure, one-dimensional string of 196 characters. The matrix is dead. Long live the string.
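A minimal sketch of that flattening step in Python, assuming the grid has already been transcribed into a list of equal-length strings. The function name `snake_flatten` and the 3×3 toy grid are my own placeholders; the real puzzle grid is deliberately not reproduced here.

```python
def snake_flatten(rows: list[str]) -> str:
    """Flatten a grid boustrophedon-style into one string.

    Rows are counted from zero: even rows are kept left-to-right,
    odd rows are reversed before being appended.
    """
    if not rows:
        return ""
    width = len(rows[0])
    parts = []
    for i, row in enumerate(rows):
        if len(row) != width:
            # A ragged row usually means a transcription slip.
            raise ValueError(f"row {i} has length {len(row)}, expected {width}")
        parts.append(row if i % 2 == 0 else row[::-1])
    return "".join(parts)


# Toy 3x3 grid standing in for the real 14x14 one.
toy_grid = ["ABC", "DEF", "GHI"]
print(snake_flatten(toy_grid))  # ABCFEDGHI
```

The length check is there because a single dropped character in one row would otherwise shift everything after it without complaint.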

The Extraction (Finding the Rules). Once flattened into a snake, the hidden English instructions naturally reveal themselves from the noise. Jane Street would have my head if I published the exact sequence here, but let us say they involve finding a starting point and stepping by a specific interval — the sort of discovery that produces the quiet satisfaction of a locksmith hearing the final tumbler click.

The Execution Engine. With our 1D string, complex two-dimensional Cartesian leaps become simple arithmetic: index = index + interval. We set up a while-loop to hop down the string, collecting hexadecimal values and summing them as we land. Because the traversal is now executed by deterministic code rather than narrated by the model, the AI’s hallucination rate for that step drops to zero, which is, I should note, the only context in which “hallucination rate drops to zero” has ever been written about a Large Language Model without irony[10].
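The hop-and-sum loop might look something like the sketch below. Everything specific in it is an assumption of mine: the function name `hop_and_sum`, the toy string, the start position and interval, the decision to skip characters that are not hexadecimal digits, and the decision to stop at the end of the string rather than wrap around. The puzzle's actual rules are not given here.

```python
import string


def hop_and_sum(snake: str, start: int, interval: int) -> int:
    """Step through `snake` from `start` in jumps of `interval`,
    summing the value of every hexadecimal digit landed on."""
    if interval <= 0:
        raise ValueError("interval must be positive")
    total = 0
    index = start
    while index < len(snake):
        ch = snake[index]
        # Assumption: anything that is not a hex digit (a hyphen, say)
        # contributes nothing to the sum.
        if ch in string.hexdigits:
            total += int(ch, 16)
        index = index + interval
    return total


# Toy string with hypothetical start and interval, not the puzzle's values.
toy_snake = "A1-F3B0-7C"
print(hop_and_sum(toy_snake, start=0, interval=3))  # 37  (A + F + 0 + C)
```

The point of the exercise is that the model's only job is to write this loop once; the counting itself is delegated to the interpreter.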

The Final Tally

I will not spoil the final positive integer for April’s puzzle — Jane Street’s lawyers are, I imagine, at least as formidable as their puzzles, and I have no wish to test the hypothesis. The joy of the challenge is the a-ha moment when the chaotic noise suddenly aligns into a pristine mathematical path.

But it serves as a rather charming reminder: no matter how advanced our models become, there remain a few Sunday mornings where human intuition and a simple, physical piece of scratchpad paper can outpace billions of dollars of computational power. The machines that write poetry and debug code cannot, as yet, read a word search. There is, I think, a metaphor in there somewhere — but I shall leave it unfound, in the spirit of the puzzle itself[11].

From the desk of,

— S.L.